GENIE: generic inverted index

1 New platforms have been extensively investigated to handle the first two Vs of big data, volume and velocity. Studies into the third V of big data, "variety" is, however, comparatively rare. Many of the big data domains generate a large amount of data with structures (e.g. sequences, trees and graphs) that need to be a process while preserving semantics. It is desirable to develop a unified framework that can be flexibly customized to handle predictive analytics on data with different structures.

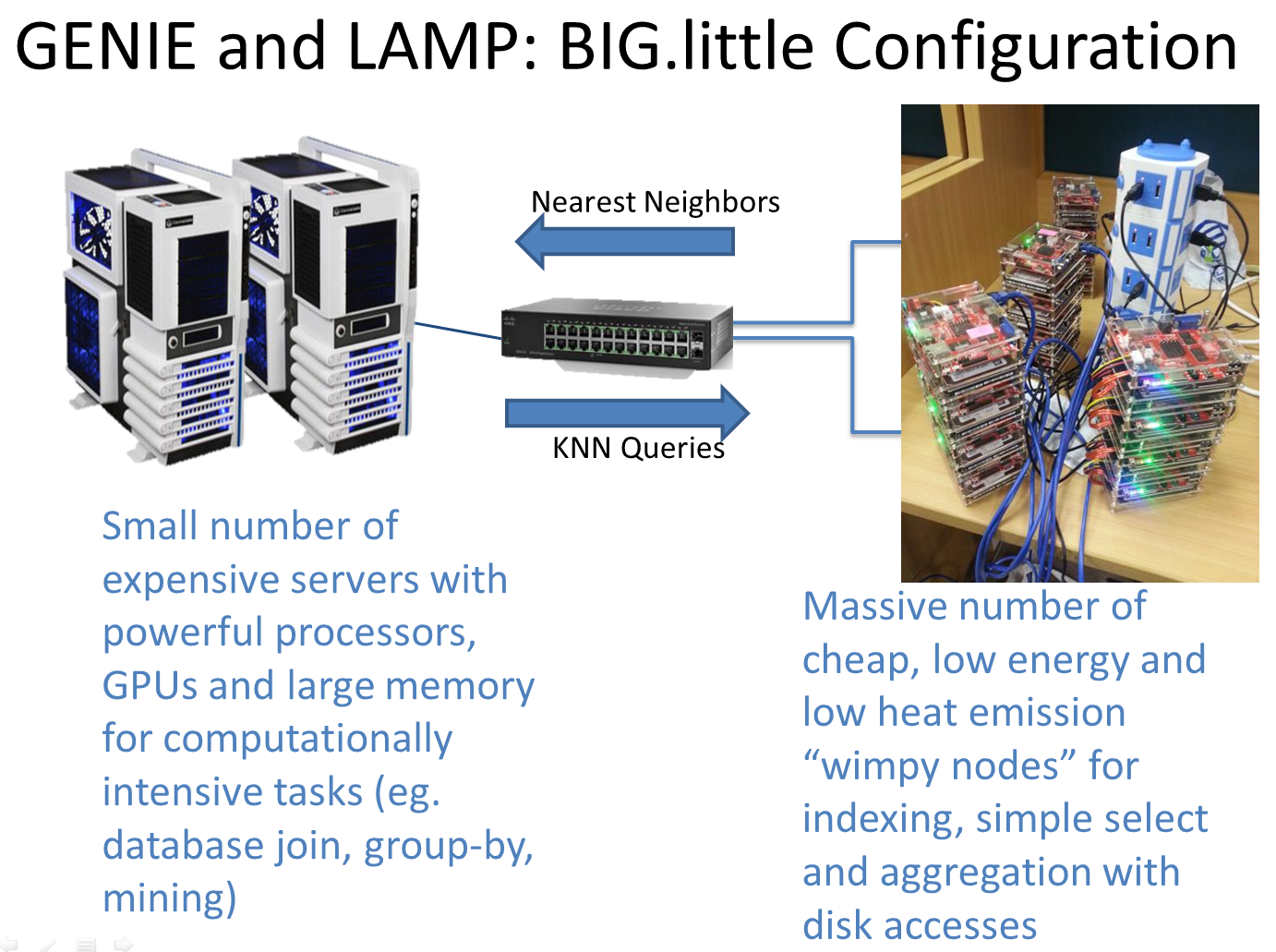

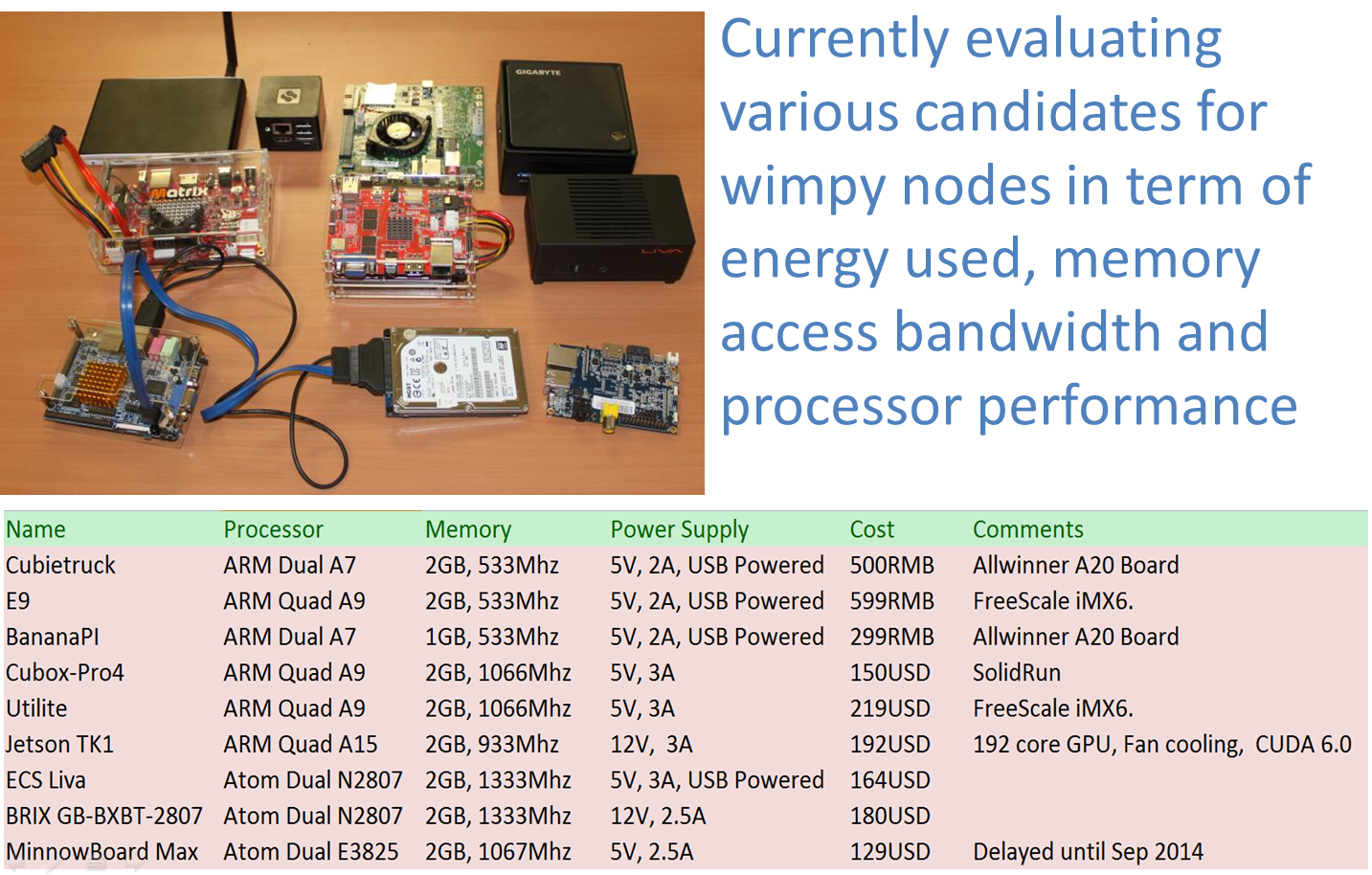

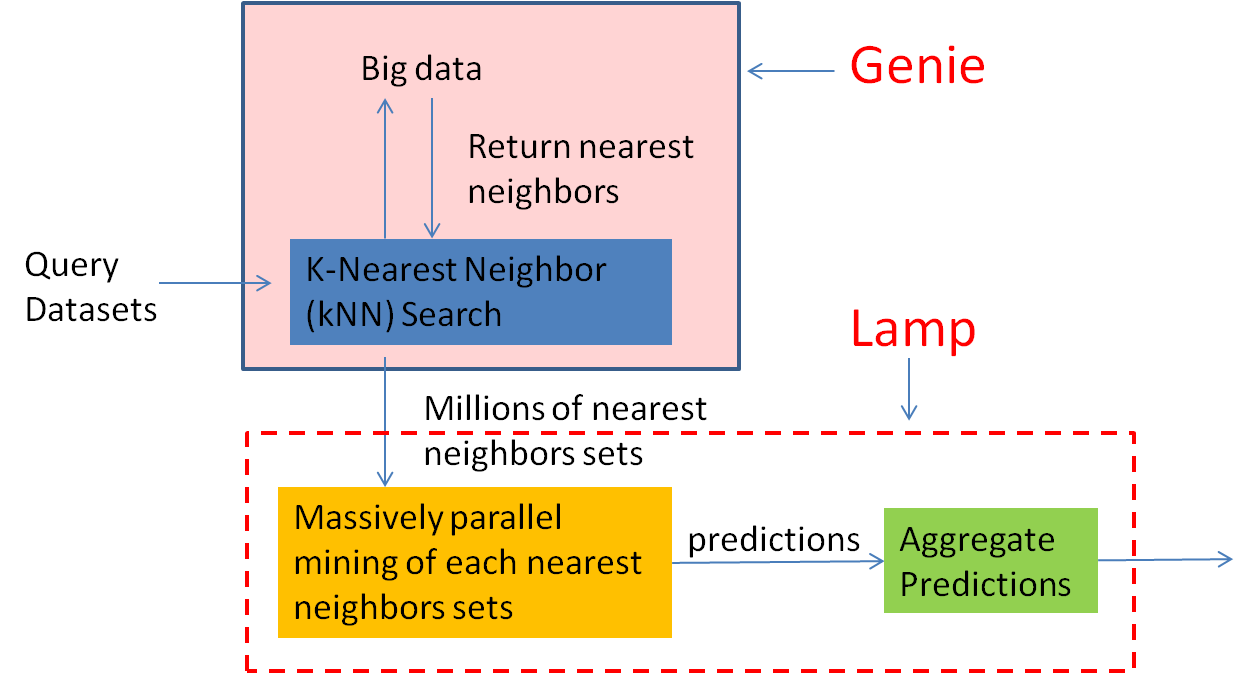

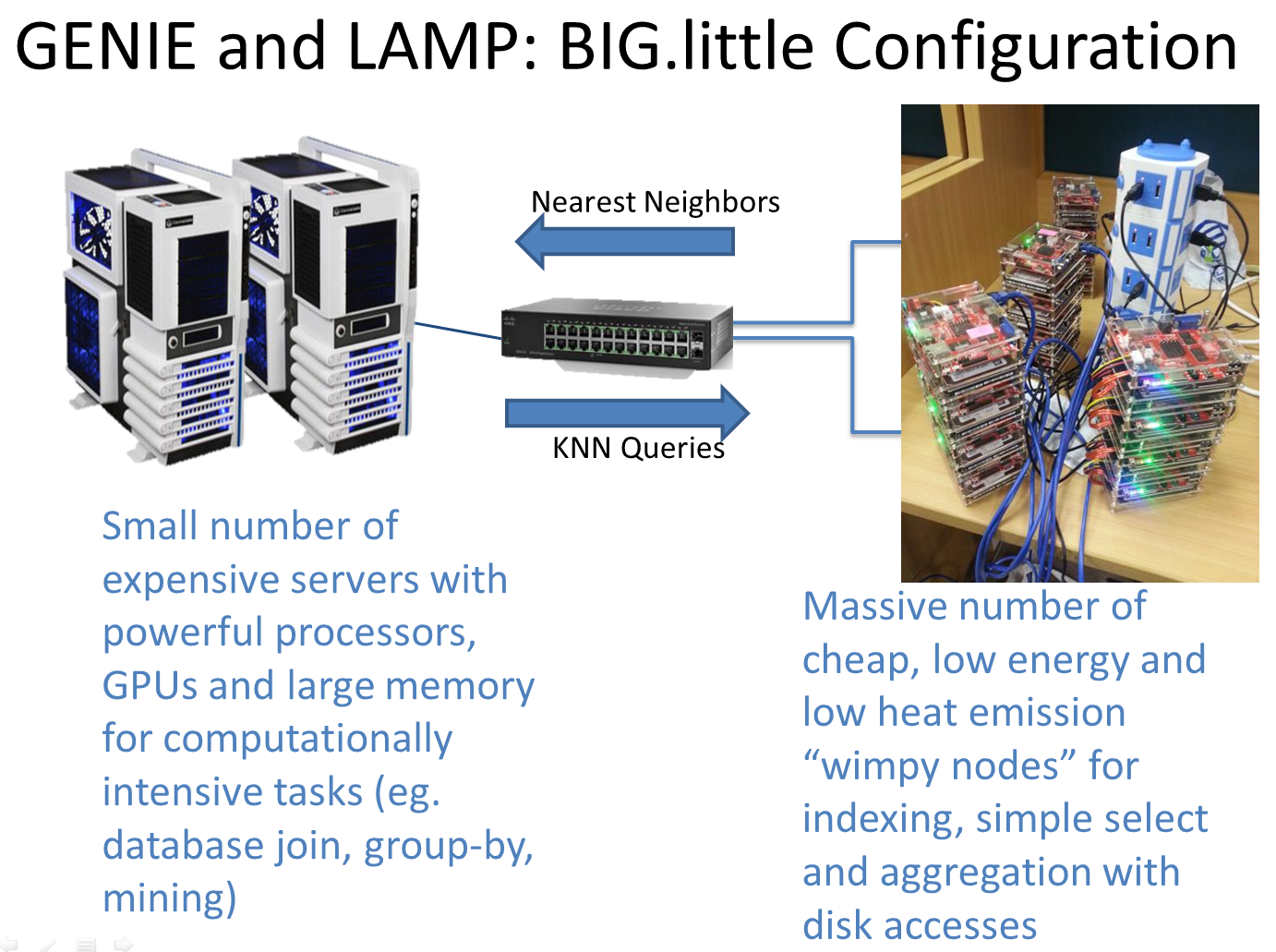

2 Specially, GENIE will efficiently support generic k-Nearest Neighbours (k-NN) search. Since LAMP requires a search for KNNs to make a prediction for a query object, finding KNNs becomes a fundamental operation to support LAMP on Big Data with a large variety of complex structures.

3 The GENIE should have the following characteristics:

- Cater to many different data types including sequences, trees, time series, trajectories, etc.

- Cater to many different similarity functions without rebuilding an index for each function.

- Handle partial similarity, for example, two sequences are similar only because they share a sufficiently large sub-sequences.

- Easily parallelizable using modern commodity hardware and scale up to a large number of concurrent queries.

- Provide a wide variety of configurations (on disk, in-memory, in-GPU) depending on application's need.

LAMP: semi-lazy mining paradigm

1 Data mining algorithms can be divided into two categories: eager learning (e.g. SVM, Neural Network) and lazy learning (e.g. nearest neighbours classification). Eager learning algorithms have high training cost and suffer information loss since they compute and commit to a model before even seeing the prediction query. Lazy learning suffers from their simplistic predicting method although they can capture a very rich set of models and hypothesis from the data. Developing methods that have the strengths but not the weakness of both approaches is highly desirable.

2 LAMP essentially follows the lazy learning paradigm until the last step where more expensive eager learning methods are applied on a set of KNNs. We argue that the modern hardware will allow these eager learning algorithms to be done efficiently on small dataset for real-time prediction.

3 The approach of LAMP goes as follow:

- Like lazy learning, LAMP does not commit to a model before prediction but keep the whole dataset intact.

- Like lazy learning, given a predictive query (typically a data object with some attribute value/s to be predicted), LAMP will find a set of k most similar neighbors(also referred to as k-nearest-neighbours or KNNs).

- LAMP then applies eager learning methods like SVM, neural network on the set of k neighbors to make a prediction.

- Jingbo Zhou, Qi Guo, H. V. Jagadish Wenhao Luan Anthony K. H. Tung; Yuxin Zheng, "Genie: Generic Inverted Index on the GPU"; Technical report, 2015, National University of Singapore

- Yuxin Zheng, Qi Guo, Anthony K. H. Tung; Sai Wu; "LazyLSH: Approximate Nearest Neighbor Search for Multiple Distance Functions with a Single Index"; Proc. of 2016 ACM Int. Conf. on Management of Data (SIGMOD 2016) [to appear].

- Jingbo Zhou, Anthony K. H. Tung; "SMiLer: A Semi-Lazy Time Series Prediction System for Sensors"; Proc. of 2015 ACM Int. Conf. on Management of Data (SIGMOD 2015).

- Xiaoli Wang, Xiaofeng Ding, Anthony K.H. Tung, Zhenjie Zhang; "Efficient and Effective KNN Sequence Search with Approximate n-grams"; The Proceedings of the VLDB Endowment 7(1):1-12 (PVLDB 2014).

- Jingbo Zhou, Anthony K. H. Tung, Wei Wu, Wee Siong Ng; "A Semi-Lazy Approach to Probabilistic Path Prediction in Dynamic Environments"; Proc. of 2013 ACM SIGKDD Int. Conf. on Knowledge Discovery and Data Mining (KDD 2013).

- Jingbo Zhou, Anthony K. H. Tung, Wei Wu, Wee Siong Ng; "R2-D2: a System to Support Probabilistic Path Prediction in Dynamic Environments via Semi-Lazy Learning"; Proc. of 2013 Int. Conf. on Very Large Databases (VLDB 2013). [Demo paper]

Faculty

Anthony K. H. Tung, National University of Singapore

Collaborators

Chen Gang, Zhejiang University

H. V. Jagadish, University of Michigan, Ann Arbor

Shou Lidan, Zhejiang University

Wu Sai, Zhejiang University

Research Fellows

Zheng Yuxin, National University of Singapore

PhD Sudents

Guo Qi, National University of Singapore

Liu Qi, National University of Singapore

Luo Pingyi, National University of Singapore

Interns

Hu Wenyan, National University of Singapore

Luan Wenhao, National University of Singapore

Tang Yixuan, National University of Singapore

Yang Yueji, Wuhan University

Zhou Shaowen, National University of Singapore

Zhu Lei, National University of Singapore

Alumni

Bao Zhifeng, Royal Melbourne Institute of Technology

Wang Xiaoli, XiaMen University

Zhou Jingbo, BaiDu