NUS Computing Team Wins Best Paper Award at MMM 2026

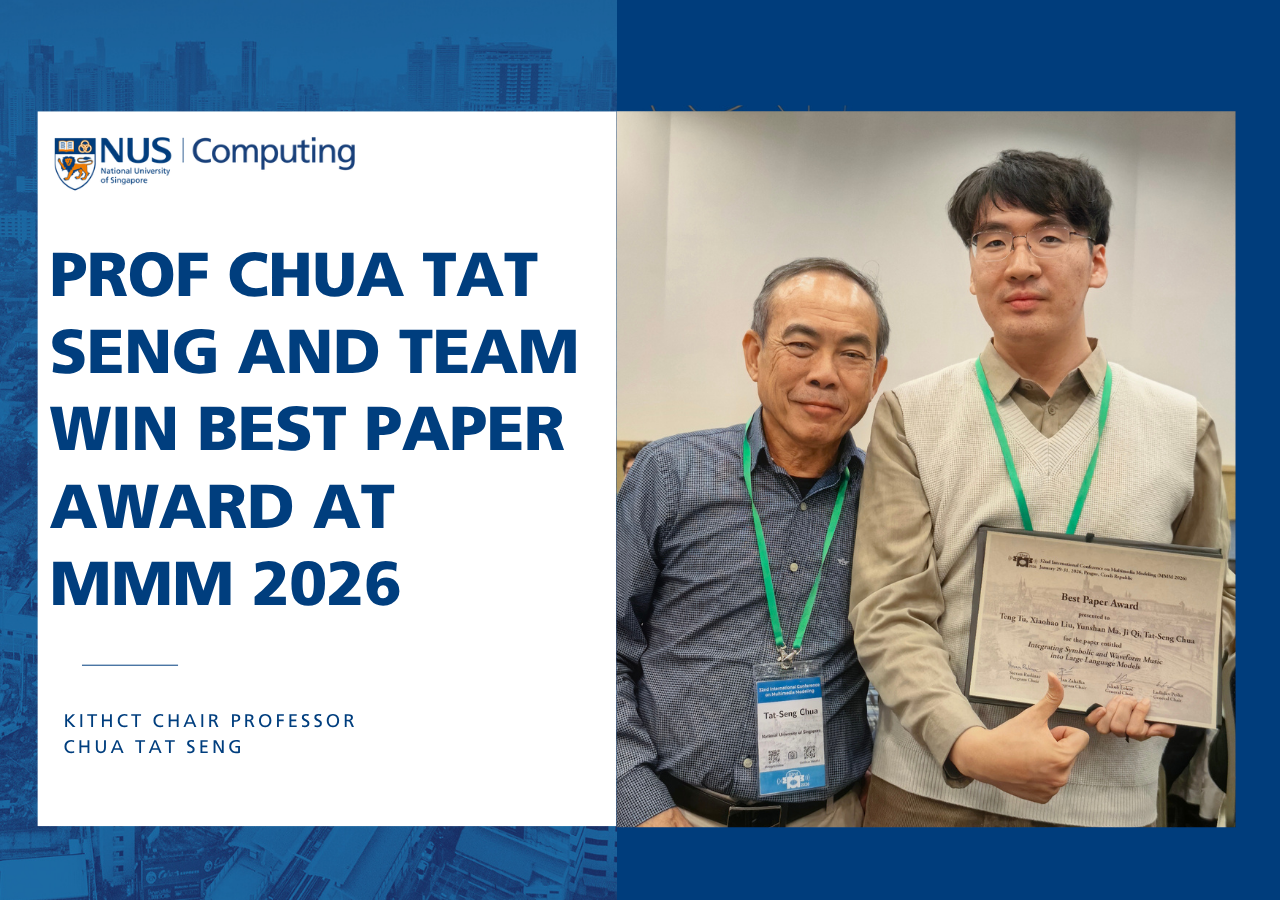

A new model that teaches AI to understand and create music – across audio waveforms, symbolic notation, and text – has won the Best Paper Award at the 32nd International Conference on Multimedia Modeling (MMM 2026), held in Prague, Czech Republic, from 29 to 31 January 2026.

The paper, "Integrating Symbolic and Waveform Music into Large Language Models", was authored by Tu Teng and Liu Xiaohao (National University of Singapore), Ma Yunshan (Singapore Management University), Qi Ji (Tsinghua University), and KITHCT Chair Professor Chua Tat Seng.

Most existing music AI models work with either symbolic notation – such as scores and sheet music – or audio waveforms, but not both. The team’s framework, UniMuLM, is described as the first to integrate these two representations within a single language model. It uses a hierarchical encoder that aligns musical information at the beat, bar, and phrase levels, allowing the model to capture both fine-grained detail and broader musical structure.

UniMuLM achieved performance competitive with specialised models on music understanding tasks, while outperforming existing baselines – including GPT-4o – on music theory reasoning and melody completion.

This achievement is a testament to the team's work and to the depth of multimodal AI research at NUS Computing – where the question isn't just how machines process information, but how they might one day understand something as deeply human as music.