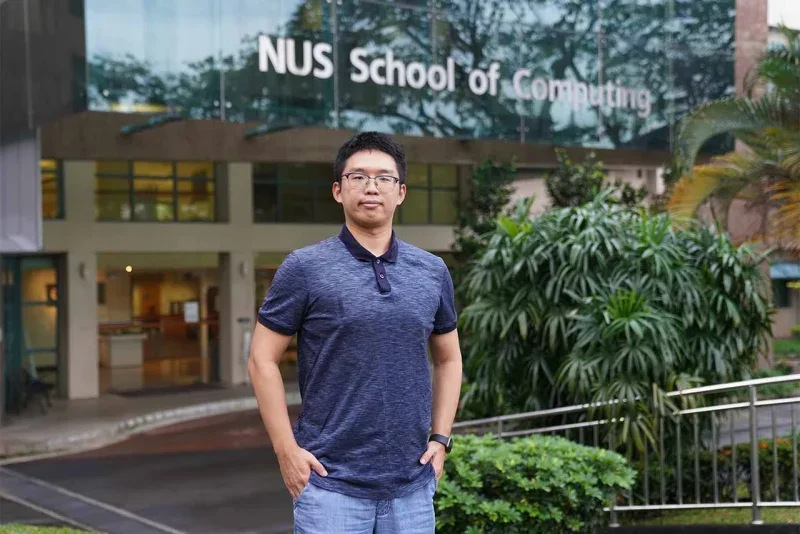

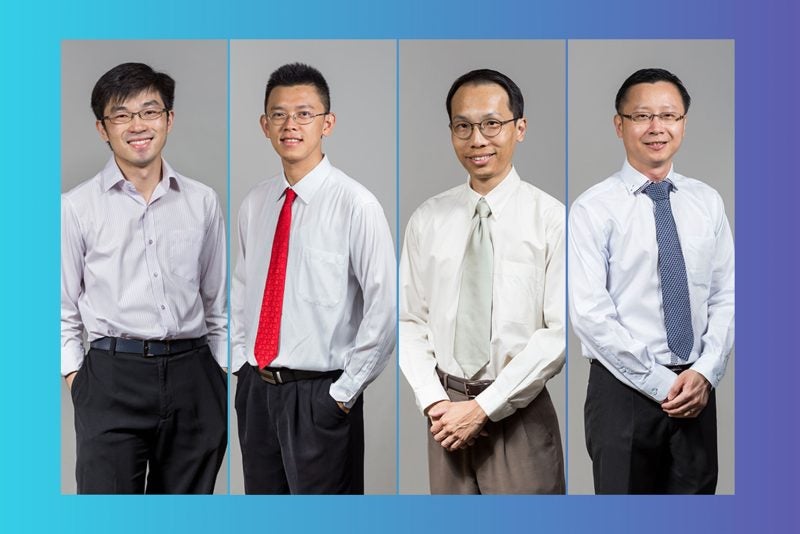

30 April 2022 — NUS Computing Assistant Professor Brian Lim and Computer Science PhD student Zhang Wencan have won the Best Paper Award at the ACM CHI Conference on Human Factors in Computing Systems, which takes place from 30 April to 5 May this year.

Dr Lim and Zhang won the award for their paper, “Towards Relatable Explainable AI with the Perceptual Process”. The Best Paper Award is given only to the top 1% of papers at the conference.

The conference is the most prestigious in the field of human-computer interaction, and is one of the top-ranked conferences in computer science.

Explanations in artificial intelligence (AI) are important for building trustworthy and understandable machine learning systems, as people often want to know why an AI made a puzzling prediction instead of the outcome they expected.

However, non-visual or abstract AI predictions are difficult to explain, since current explanations are too technical and do not relate closely enough to what people are familiar with.

In their paper, Dr Lim and Zhang drew inspiration from the human psychology theory of Perceptual Processing to define a unified framework for relatable explanations in AI.

“To create interpretable AI models that are human-like, we implemented a deep learning model, RexNet, to explain outcomes with relatable examples, attentions, and cues. For example, our system can explain why an AI predicted that a person’s voice was happy and not sad by synthesising a sad version of the speech, identifying where to focus on, and describing how their cues differed, such as the higher volume and higher pitch in a person’s tone when he or she is happy. This provides human-relatable explanations to improve the understanding and trust of AI for many applications,” said Dr Lim.

He believes the paper stood out for its approach to the problem, which is grounded in human cognitive psychology, and for its novel technical solution to the significant problem of explainable AI.

“We performed rigorous qualitative and quantitative user studies with resounding results to validate the benefits of our explanations. It formalises relatable explanations for AI, and was the first to provide intuitive explanations for non-visual, audio applications,” said Dr Lim.