17 November 2021 – Five NUS Computing PhD students were recently awarded 2021 Google PhD Fellowships. Shen Li, Soundarya Ramesh, Teodora Baluta, Tianyuan Jin and Qinbin Li received the Fellowships, which recognise graduate students doing exceptional and innovative research in computer science and related fields, such as algorithms, human-computer interaction, machine learning and mobile computing.

According to Google, the program supports promising PhD candidates of all backgrounds who seek to influence the future of technology. Fellows will receive US$10,000 a year for up to three years for their research-related activities and travel expenses, and will also be paired with a Google Research Mentor. This is the first iteration of the program in Southeast Asia.

Making machine learning more trustworthy

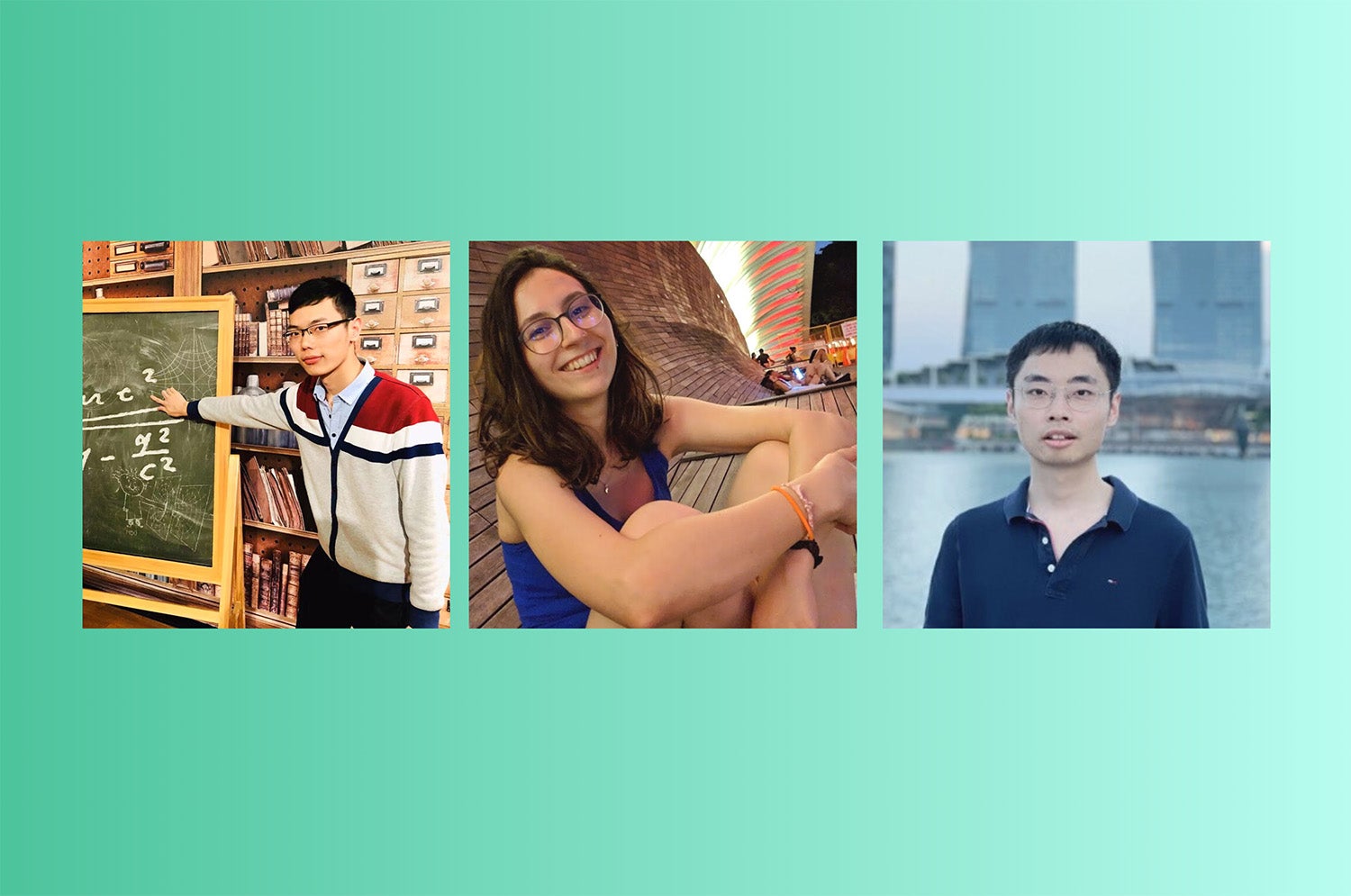

Teodora Baluta, a Year 5 PhD student in Computer Science, intends to investigate the desirable properties of trustworthy machine learning, as proposed in her application for the Google PhD Fellowship.

“Machine learning – and especially deep learning – is used for a wide range of applications these days, but a part of the issue with adopting this technology is that it is difficult to trust these ‘black-box’ systems. They can be easily fooled into predicting a certain output, they may produce biased and unfair results, and we can even extract sensitive information from them – all of which can have grave consequences. For example, not getting a bank loan because of bias,” explained Baluta.

Baluta, who is supervised by Assistant Professor Kuldeep S. Meel and Associate Professor Prateek Saxena, said she initially focused on neural networks for automated binary analysis.

“We realised that even though the performance (as measured by accuracy) was high, we could not readily use the neural networks because they were not learning the ‘right’ function. In some domains, that may be acceptable but the question that arose then was how much are neural nets behaving as intended? So I started from machine learning for security and focused on security for machine learning,” said Baluta.

Baluta believes that her cross-domain research, which spans security and privacy, machine learning and formal methods, helped her proposal stand out.

“Another aspect is that my research proposal addresses a timely and increasing concern as the emphasis on AI grows – for example, ensuring autonomous car safety standards and enacting privacy laws such as GDPR – which aligns very well with Google’s research directions,” she added. “I wanted to get exposure to research in the industry – and being able to work directly with a research mentor at Google is a great way to do that!”

Towards effective, efficient, and private federated learning algorithms and systems

For his research, Qinbin Li, a Year 3 Computer Science PhD student, set out to improve federated learning.

In conventional machine learning, a computer system identifies patterns in data collected from various devices and uses them to make decisions or predict outcomes. However, this means the system accesses sensitive data on those devices and uploads it to a single server, which could give rise to data privacy and security issues.

Federated learning is a decentralised form of machine learning in which only the training results, and not the sensitive data, leave each device. This enables researchers to build better machine learning models without sharing or exchanging sensitive data.
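To make the idea concrete, here is a minimal sketch of federated averaging, a standard baseline for federated learning. It is not one of Li's algorithms. It assumes a toy one-dimensional linear model, and the function names, the synthetic data and the learning rate are all hypothetical choices made for illustration.

```python
import random

def local_update(weights, data, lr=0.3, epochs=20):
    """Run a few gradient-descent steps on one client's private data.

    The model is a 1-D linear fit y = w * x + b. The raw `data` is only
    read here; just the updated weights are returned to the server.
    """
    w, b = weights
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x
            grad_b += 2 * err
        w -= lr * grad_w / len(data)
        b -= lr * grad_b / len(data)
    return (w, b)

def federated_average(client_weights, client_sizes):
    """Server step: average client models, weighted by local dataset size."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)

def make_client_data(rng, n, x_low, x_high):
    """Hypothetical private data following y = 3x + 1 plus small noise."""
    xs = [rng.uniform(x_low, x_high) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.01)) for x in xs]

def run_federated_training(rounds=50, seed=0):
    rng = random.Random(seed)
    # Each client sees a different range of x, so the local datasets
    # are not identically distributed.
    clients = [
        make_client_data(rng, 20, 0.0, 0.5),
        make_client_data(rng, 40, 0.5, 1.0),
        make_client_data(rng, 30, 0.2, 0.8),
    ]
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        # Clients train locally and send back only their updated weights.
        updates = [local_update(global_weights, data) for data in clients]
        global_weights = federated_average(updates, [len(d) for d in clients])
    return global_weights

if __name__ == "__main__":
    w, b = run_federated_training()
    print(f"learned w={w:.3f}, b={b:.3f}")
```

The server only ever handles model weights, so the shared model is fitted without any client's raw data points being uploaded.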

“My existing studies address the following important directions in federated learning: effective federated learning on non-IID data, communication-efficient and private federated learning, benchmarks and systems,” said Li. Non-IID data refers to data that is not independent and identically distributed.

In his research, Li proposes two novel approaches which develop effective federated learning algorithms for gradient boosting decision trees and deep neural networks. These algorithms have significantly outperformed those in other studies, he added.

For communication-efficient and private federated learning, he also proposed a new approach that needs only one communication round and offers flexible privacy guarantees.

“Besides algorithms, I am also developing benchmarks and systems. The benchmark provides thorough non-IID data partitioning approaches and comparisons between existing federated learning algorithms. While existing federated learning systems mostly focus on deep learning, the FedTree system is designed for tree-based models. It is highly efficient, effective, and secure, which will benefit the machine learning community a lot,” explained Li.

Li also intends to delve deeper into federated self-supervised learning, which he says “is still not well investigated”.

“An effective federated self-supervised learning algorithm can make federated learning much more powerful,” he added.

Li, who is supervised by Associate Professor He Bingsheng, said he hopes to make a direct impact on Google’s users with his research.

“It is possible that I can apply the proposed algorithms and systems to more users through the platform of Google, which can make the study more impactful. Moreover, through collaboration with Google, I understand the challenges in the real-world scenarios better, and develop more practical algorithms and systems to address these challenges,” said Li.

When asked if he had any words of advice for students who are thinking of pursuing a PhD, Li said: “I think persistence is an important skill during the PhD journey. Our paper may be rejected many times, but we should always be confident about our work and ourselves.”

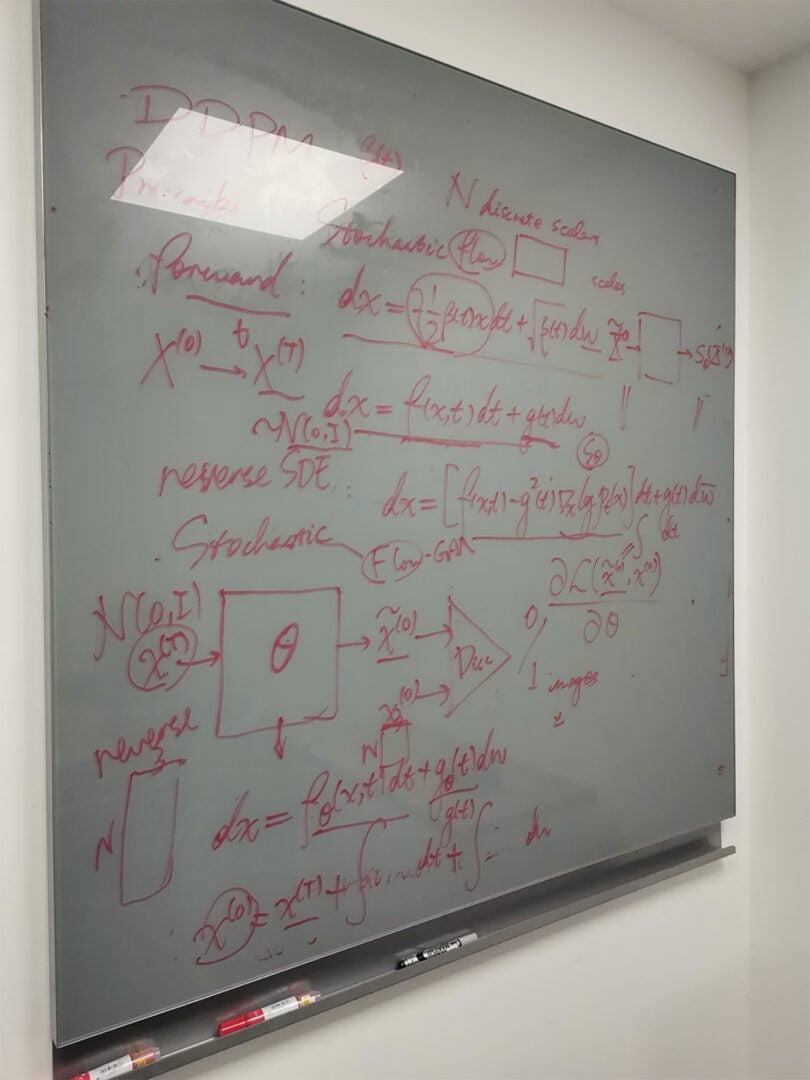

Deep generative modelling and face recognition

Shen Li, a third-year PhD student at the Institute of Data Science, focuses on deep generative modelling, in which he aims to establish a generative process for various forms of data, such as images, text and videos.

His research touches on the identifiability of deep generative models. In his work, the models achieve identifiability in a rigorous manner rather than through variational approximation, Li said.

“Another research work of mine involves face recognition, which marries variational methods (a method that is typically used in generative models) with discriminative models,” explained Li.

Li is enrolled in the Integrative Sciences and Engineering Programme (ISEP) at the NUS Graduate School, and is supervised by NUS Computing Assistant Professor Bryan Hooi.

“Prof Hooi’s modesty and profound knowledge about mathematics and computer science ignited my enthusiasm for research. Our frequent discussions at the beginning of my matriculation triggered the idea for this research proposal and (he) has been very instrumental throughout my PhD journey at NUS. I am really grateful for that,” said Li.

Li also expressed his gratitude for the guidance from his mentors, NUS Computing Assistant Professor Lee Gim Hee and Professor Xilin Chen from the University of Chinese Academy of Sciences (UCAS).

Li highlighted how a senior he met at UCAS was also instrumental in his learning and in shaping his research focus.

“When I was at UCAS, I remember one of my lecturers stressing the importance of research niches. He said, ‘There are thousands of millions of research questions in the world. But you don’t have to solve them all. You have to know where your research niche lies and focus solely on whatever is relevant’,” Li explained.

Keeping this in mind, Li said he was spurred “to create something new in a theoretically-grounded manner”.

“Our research output largely benefitted from this idea, and I am really glad to see that my research has been recognised by Google, and the Conference on Computer Vision and Pattern Recognition (CVPR) community,” he added.

Making online decision-making more efficient

For his research in machine learning, Computer Science PhD student Tianyuan Jin tackled important problems concerning multi-armed bandits (MAB), unconstrained submodular maximisation, and single-source SimRank.

In the MAB problem, a fixed set of resources must be allocated among competing choices in a way that maximises the total gain. The problem is similar to a gambler choosing which slot machines to play, how many times to play each one and in what order.

Jin’s work in machine learning has been published in various top conferences such as NeurIPS 2019 and the International Conference on Machine Learning (ICML) 2021. In his research, he developed algorithms that solved many important problems in the area of machine learning, such as batched MAB, streaming MAB, and Thompson Sampling. Thompson Sampling is an online decision-making algorithm that tackles the exploration-exploitation dilemma in the MAB problem.
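As an illustration of the exploration-exploitation dilemma, the sketch below runs the classic Beta-Bernoulli form of Thompson Sampling on a simulated set of slot machines. It is the textbook algorithm, not the variant Jin devised, and the payout probabilities, function name and number of rounds are assumptions chosen for the example.

```python
import random

def thompson_sampling(true_probs, rounds=5000, seed=0):
    """Classic Beta-Bernoulli Thompson Sampling on simulated arms.

    `true_probs` holds each arm's hidden payout probability; the
    algorithm only observes the 0/1 rewards of the arms it pulls.
    Returns the number of pulls per arm and the total reward.
    """
    rng = random.Random(seed)
    n_arms = len(true_probs)
    # Beta(1, 1) prior on every arm, i.e. uniform over [0, 1].
    alpha = [1] * n_arms
    beta = [1] * n_arms
    pulls = [0] * n_arms
    total_reward = 0

    for _ in range(rounds):
        # Draw one plausible payout rate per arm from its posterior.
        samples = [rng.betavariate(alpha[a], beta[a]) for a in range(n_arms)]
        # Play the arm whose sampled rate is highest. Uncertain arms
        # sometimes draw high values, which drives exploration.
        arm = max(range(n_arms), key=lambda a: samples[a])
        reward = 1 if rng.random() < true_probs[arm] else 0

        # Update the chosen arm's posterior with the observed reward.
        alpha[arm] += reward
        beta[arm] += 1 - reward
        pulls[arm] += 1
        total_reward += reward

    return pulls, total_reward

if __name__ == "__main__":
    pulls, reward = thompson_sampling([0.2, 0.5, 0.8])
    print("pulls per arm:", pulls, "total reward:", reward)
```

Early on, every arm is tried because the posteriors are wide. As evidence accumulates, the draws concentrate on the best arm and most pulls go to it.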

“For the batched bandits, we have a problem of how to make use of information parallelism in online decision making while efficiently balancing the exploration-exploitation trade-off. I devised algorithms that achieve the optimal regret/sample complexity while using a minimum number of paralleled batches. For streaming bandits, I devised an algorithm that uses only a single arm memory while achieving the optimal sample complexity for the best arm identification problem. The results show that there exists no trade-off between the sample complexity and the space complexity. As for Thompson sampling (TS), I devised a variant of TS and proved that it is optimal on both minimax and asymptotic regret, and showed that it empirically outperforms the state of the art,” he explained.

“Winning the Google PhD Fellowship is a great honour. It is an incredible recognition of the importance of my work. This will also provide a big encouragement and support for my future work,” said Jin, who also attributed his success to his PhD supervisor, Associate Professor Xiao Xiaokui.